By Adam Wilson, CEO of Trifacta

I’m impatient. I admit it. My first thought is often, “Oh, there’s gotta be a faster, better way to do this.” It’s a big reason why I became a data engineer in the first place: so I could build a better way.

When I hear about people hesitating to get vaccinated for COVID-19 because the vaccines were developed “too quickly,” I understand why they might think that, but I also understand how the approach, particularly with respect to wrangling the critical data, was very different for COVID-19. I’m not a scientist, so I won’t comment on the clinical trial research or regulatory review process that resulted in the fastest vaccine rollout in history. But I am a data engineer, and I have seen how data has been curated and shared at unprecedented speed and scale to help researchers and health organizations worldwide respond to COVID-19 outbreaks, develop better prevention strategies, and advance scientific discoveries. I’d like to share three examples with you.

Putting Data Quality on the Map

For the U.S. Centers for Disease Control and Prevention (CDC), it all starts with putting dots on a map. Lots and lots of dots. Person A had some kind of contact with Person B at Location X.

Fine-grained maps of transmission dynamics are indispensable in controlling infectious diseases. For HIV, as in the 2015 outbreak in rural Indiana, transmission dynamics mean needle sharing or sexual contact. With COVID-19, they could mean a single face-to-face conversation between two people at a cash register or prolonged contact over time among many children and staff in a network of child care facilities in Salt Lake City, Utah.

But to understand who’s connected to whom and see how different outbreaks and transmission chains spread across a certain locale, computational biologists at the CDC needed to turn seemingly endless rows of names, addresses, dates, and other data points into sleek, visual maps.

Is the address 2400 N DRUID HILLS RD NE the same place as DRUID HILLS TARGET? Is IGNACIO RODRIGUEZ the same person as NACHO RODRIGUES? Is 2020/03/04 the date for March 4, 2020 or April 3, 2020? Data quality issues like these can seem small and niggling, but have enormous consequences when it comes to tracking COVID-19 cases, particularly in bidirectional contact tracing.

When lives are at stake, speed and accuracy are critical. Data engineering cloud technology automates the tedious, time-consuming, labor-intensive data cleanup work. Not only can outliers in the data be pinpointed and standardized, and errors be corrected at breathtaking speed and scale, but this smart technology learns as it goes, suggesting corrections along the way and preventing faulty data from compromising the CDC’s mapping outputs.
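To make the data quality questions above concrete, here is a minimal Python sketch of two such checks: scoring how similar two spellings of a name are, and listing every calendar date an ambiguous string could represent. It is an illustration only, not the CDC's actual tooling. The function names are hypothetical, and plain string similarity is a stand-in for the much richer matching a production system would need (it cannot know, for example, that "Nacho" is a nickname for "Ignacio").

```python
from datetime import date, datetime
from difflib import SequenceMatcher


def _normalize(name: str) -> str:
    """Uppercase the name and collapse repeated whitespace."""
    return " ".join(name.upper().split())


def name_similarity(a: str, b: str) -> float:
    """Return a similarity ratio between 0 and 1 for two names (hypothetical helper)."""
    return SequenceMatcher(None, _normalize(a), _normalize(b)).ratio()


def possible_dates(text: str) -> set[date]:
    """Return every date the string could mean as year/month/day or year/day/month."""
    candidates = set()
    for fmt in ("%Y/%m/%d", "%Y/%d/%m"):
        try:
            candidates.add(datetime.strptime(text, fmt).date())
        except ValueError:
            # This reading is not a valid calendar date, so skip it.
            pass
    return candidates


if __name__ == "__main__":
    score = name_similarity("IGNACIO RODRIGUEZ", "NACHO RODRIGUES")
    print(f"name similarity: {score:.2f}")

    readings = possible_dates("2020/03/04")
    if len(readings) > 1:
        print("ambiguous date, needs review:", sorted(readings))
```

A record whose date has more than one valid reading, or whose name score falls in a gray zone, would be routed to a person for review rather than silently corrected. Where to set that gray zone is a judgment call that depends on the data.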

Data Sharing Is Caring

At the Infectious Diseases Data Observatory (IDDO) at the University of Oxford, contributors from all over the world collect and share data about poverty-related diseases like malaria, as well as COVID-19. Regulatory bodies and policy makers share and analyze datasets in the IDDO repository to work together in developing and publishing new treatment guidelines.

IDDO’s work has convinced me that the ultimate weapon in the fight against infectious diseases isn’t data itself—it’s the sharing, curation, and harmonization of data.

The IDDO data platform hosts one of the largest international collections of clinical data related to COVID-19. Thousands of independent hospitals and health institutes worldwide have shared their individual patient data, treatment data, symptom data, and microbiology data. The data arrives in formats ranging from simple spreadsheets to exports from sophisticated statistical packages, all of which must be standardized before they are useful.

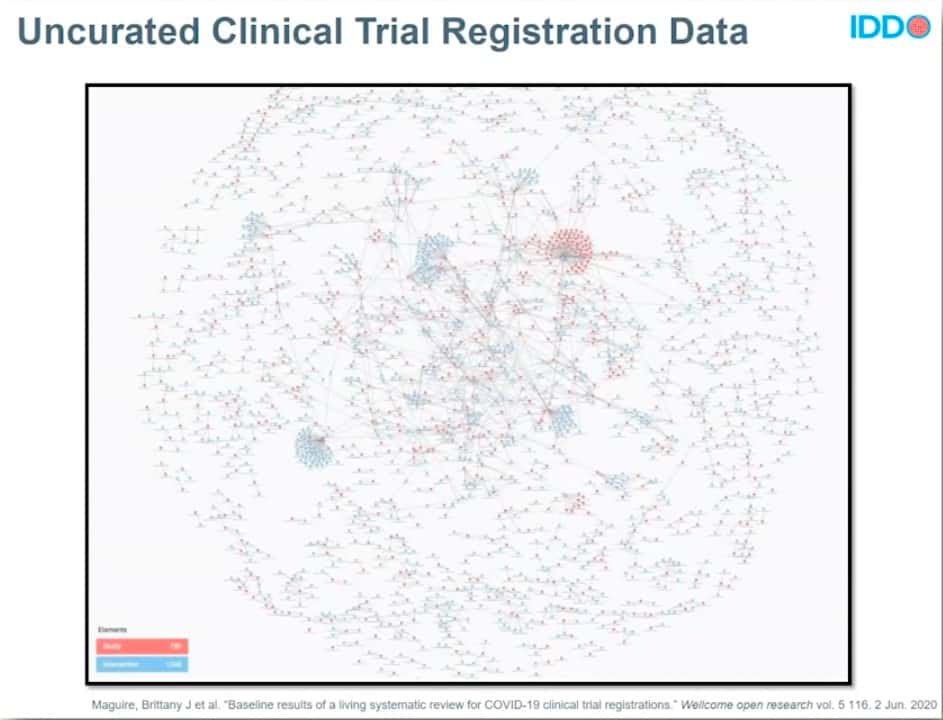

In parallel, IDDO is also working to analyze and share information on all of the COVID-19 clinical trials registered across the international study registries. The lack of standardized data capture and nomenclature from country to country makes understanding and using these data very difficult. At one point, IDDO identified more than 35,000 distinct terms for drugs. This precious COVID-19 clinical trial data, desperately needed at a time of global pandemic and great suffering, was a mess.

BEFORE DATA CURATION

Image credit: https://wellcomeopenresearch.org/articles/5-116

To untangle this massive, messy hairball of data, IDDO relies on data engineering cloud technology. It lets IDDO do the data dirty work—curating the data, standardizing it according to common codes and practices, and harmonizing it to create clean, usable datasets that researchers worldwide can see and share.

AFTER DATA CURATION

Image credit: https://wellcomeopenresearch.org/articles/5-116

The result? COVID-19 researchers now know who’s collecting what type of data and where. They can see which clinical trials are already underway so they don’t waste time on redundancies. And they get a massive head start on their work with clean, standardized, readily available datasets.
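The harmonization step described in this section, where many free-text spellings of a drug are mapped to one common term, can be sketched in a few lines of Python. This is a simplified illustration and not IDDO's actual pipeline: the synonym table, the sample terms, and the function names are all assumptions made up for the example, and a real project would rely on a curated vocabulary rather than a hand-written dictionary.

```python
import re
from collections import Counter

# Hypothetical synonym table: normalized variant -> canonical drug name.
SYNONYMS = {
    "hcq": "hydroxychloroquine",
    "hydroxychloroquine": "hydroxychloroquine",
    "hydroxychloroquine sulfate": "hydroxychloroquine",
    "hydroxy chloroquine": "hydroxychloroquine",
    "dexamethasone": "dexamethasone",
    "dexamethason": "dexamethasone",
}


def normalize(term: str) -> str:
    """Lowercase, strip dose information and punctuation, collapse whitespace."""
    term = term.lower()
    term = re.sub(r"\d+(\.\d+)?\s*(mg|g|ml|mcg)\b", " ", term)  # doses such as "400mg"
    term = re.sub(r"[^a-z\s]", " ", term)  # punctuation and leftover digits
    return " ".join(term.split())


def harmonize(term: str) -> str:
    """Map a free-text term to its canonical name, or flag it for manual review."""
    key = normalize(term)
    return SYNONYMS.get(key, f"UNMAPPED:{key}")


if __name__ == "__main__":
    # Made-up sample input; "Vitamin Z" is deliberately not in the table.
    raw_terms = [
        "HCQ",
        "Hydroxychloroquine Sulfate 400mg",
        "hydroxy-chloroquine",
        "Dexamethason",
        "Vitamin Z",
    ]
    for name, count in Counter(harmonize(t) for t in raw_terms).items():
        print(name, count)
```

Anything the table does not recognize is flagged rather than guessed at, so a curator can decide whether it is a new drug or yet another spelling of a known one.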

Building Data Pipelines to New Genetic Discoveries

Genomics England partnered with the GenoMICC Consortium, led by the University of Edinburgh, to rapidly sequence the whole genomes of people affected by COVID-19, and to provide a new research environment for researchers to understand the role of genetic risk factors in patient responses to COVID-19.

Its earlier success with the 100,000 Genomes Project gave Genomics England a head start with genomic sequencing data from 100,000 people with cancer or rare diseases. Tens of thousands of patients diagnosed with asymptomatic, mild, severe, and long-haul cases of COVID-19 were recruited to contribute to Genomics England’s data ecosystem. And Genomics England decided that the best and fastest way to stand up a new research environment was to build it in the cloud.

Genomic data has to be supplemented with clinical data from the National Health Service (NHS), Public Health England, and other providers to provide context for research. Some of these datasets are mind-bogglingly huge. A single 10-gigabyte dataset from the NHS on hospital statistics was 138 fields wide and more than 3 million rows long! Data on this massive scale streams into the cloud environment where it’s stored, de-identified, cleaned, profiled, standardized, and automatically pipelined out to researchers.
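Two of the pipeline stages named above, de-identifying and profiling, can be illustrated with a short Python sketch. It is a toy under stated assumptions, not a description of how Genomics England or the NHS actually process records: the field names, the secret key, and the helper functions are hypothetical, and real de-identification involves far more than dropping a few columns.

```python
import hashlib
import hmac
from collections import Counter

# Hypothetical values: a real pipeline would load the key from a secrets manager
# and take the list of identifying fields from a governance policy.
SECRET_KEY = b"replace-with-a-managed-secret"
DIRECT_IDENTIFIERS = {"name", "address"}


def pseudonymize(patient_id: str) -> str:
    """Replace an ID with a keyed hash so records still link up without exposing the ID."""
    digest = hmac.new(SECRET_KEY, patient_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def deidentify(record: dict) -> dict:
    """Drop direct identifiers and pseudonymize the patient ID."""
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    cleaned["patient_id"] = pseudonymize(record["patient_id"])
    return cleaned


def profile(records: list[dict]) -> dict:
    """Count missing values (None or empty string) per field."""
    missing = Counter()
    for record in records:
        for field, value in record.items():
            if value is None or value == "":
                missing[field] += 1
    return dict(missing)


if __name__ == "__main__":
    # Made-up sample rows for illustration only.
    rows = [
        {"patient_id": "P001", "name": "Jane Doe", "address": "1 Main St", "age": 54, "outcome": ""},
        {"patient_id": "P002", "name": "John Roe", "address": "2 High St", "age": None, "outcome": "recovered"},
    ]
    safe_rows = [deidentify(r) for r in rows]
    print(safe_rows)
    print("missing values per field:", profile(safe_rows))
```

Using a keyed hash rather than a plain one means someone who knows a patient ID cannot recompute the pseudonym without also holding the key. On a dataset with 138 fields and millions of rows, the same idea would be applied column by column in a streaming or distributed job rather than in an in-memory list.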

Genomics England’s ability to harness the power of data and data engineering cloud technology may lead to the discovery of genetic risk factors and new treatments for COVID-19 patients. This kind of breakthrough work, at breakneck speed, wouldn’t have been possible even a few years ago.

I’m still an impatient person. But I’m also hopeful. A vaccine for COVID-19 was developed in record time. While COVID variants may always be with us, I’m confident that data—and technologies created to radically improve the productivity of people who work with data—will help researchers and health organizations worldwide to collaborate, experiment, iterate, and quickly find ways to prevent, treat, and cure infectious diseases like COVID-19.

Adam Wilson is CEO of Trifacta and has more than 20 years of experience in data integration and analytics. Under his leadership, Trifacta has delivered the industry’s first data engineering cloud that leverages decades of innovative research in human-computer interaction, scalable data management, and machine learning to make the process of preparing data and engineering data products faster and more intuitive.

The Editorial Team at Healthcare Business Today is made up of experienced healthcare writers and editors, led by managing editor Daniel Casciato, who has over 25 years of experience in healthcare journalism. Since 1998, our team has delivered trusted, high-quality health and wellness content across numerous platforms.
